LocalAI

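LocalAI is a free, open-source, local-first AI inference framework. At its core it provides a local inference REST API that is compatible with the OpenAI API specification (and also with the Elevenlabs, Anthropic, and other APIs). It supports local or private deployment on consumer-grade hardware for large language models (LLMs), image generation, audio generation, and more. It can run without a GPU and covers many model families.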

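Core features

- API compatibility: a drop-in replacement for the OpenAI API, so existing software built on the OpenAI API can migrate at low cost;

- Local deployment: runs on consumer-grade hardware (a GPU is optional), and data never has to leave the machine, which helps with privacy and compliance requirements;

- Multiple capabilities: beyond LLM inference, it also covers image generation and audio generation (TTS and so on);

- Rich model ecosystem: a built-in Model Gallery lets you install models from HuggingFace and other sources through the CLI or API; a library of freely licensed models is provided by default, and custom gallery repositories are supported;

- Multiple backends: the underlying layer works with several backends (implemented in C++, Go, Python, and others) to suit the runtime needs of different models;

- Extensions: it ties in with LocalAGI (AI agent orchestration), LocalRecall (knowledge base), Cogito (LLM workflow library), and other tools to form a complete local AI infrastructure suite.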

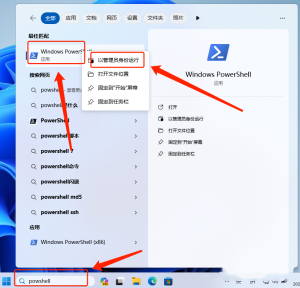

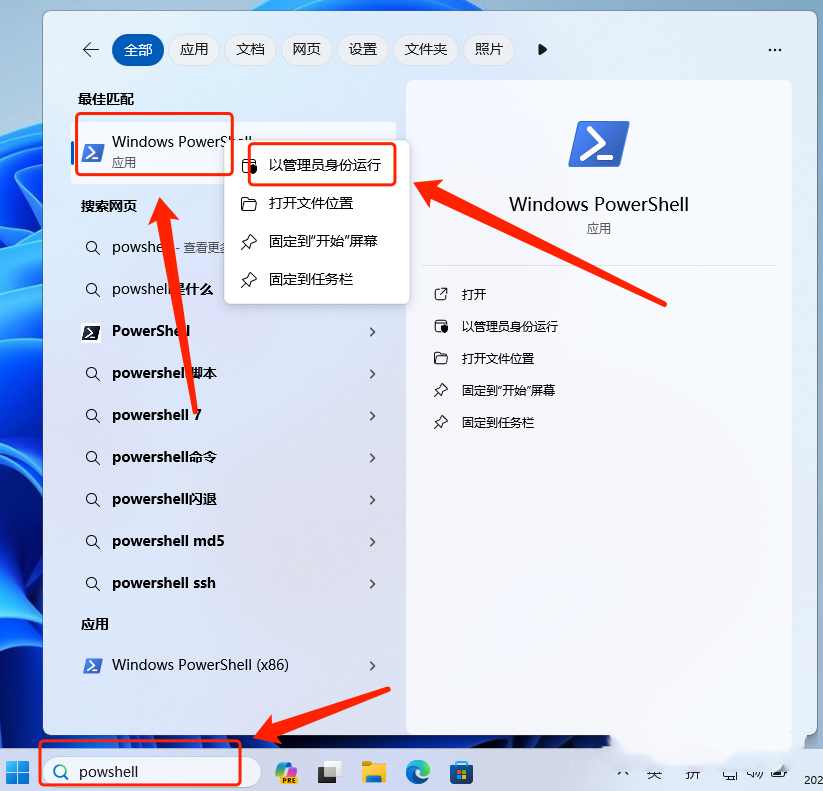

Installation

Docker Compose (CPU-only version)

    services:
      localai:
        image: localai/localai:latest
        container_name: localai
        ports:
          - "8080:8080"
        volumes:
          - ./models:/models
        restart: always

Usage

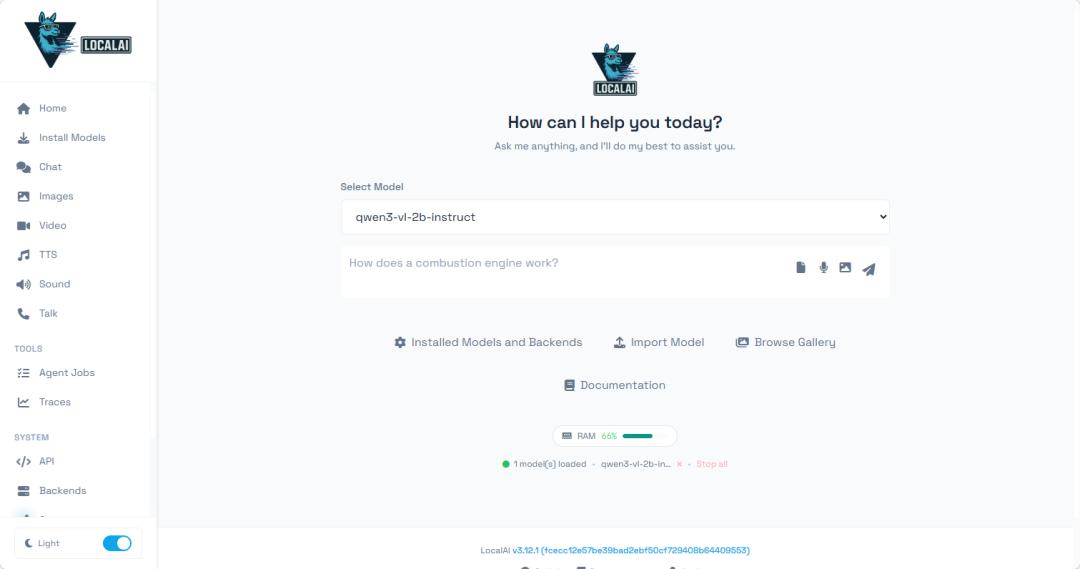

Open http://<NAS IP>:8080 in a browser to see the web interface.
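Because the same port also serves the OpenAI-compatible REST API, a short script can confirm that the service is reachable. The following is a minimal sketch, not an official client: it assumes the OpenAI-style `GET /v1/models` listing endpoint and the port 8080 mapping from the Compose file, and the helper names are illustrative only.

```python
import json
import urllib.request


def models_url(host: str, port: int = 8080) -> str:
    """Build the URL of the OpenAI-style model listing endpoint."""
    return f"http://{host}:{port}/v1/models"


def parse_model_ids(body: str) -> list[str]:
    """Extract model ids from an OpenAI-style {"data": [{"id": ...}]} response."""
    payload = json.loads(body)
    return [item["id"] for item in payload.get("data", [])]


def list_models(host: str, port: int = 8080, timeout: float = 5.0) -> list[str]:
    """Query the server and return the ids of the models it reports."""
    with urllib.request.urlopen(models_url(host, port), timeout=timeout) as resp:
        return parse_model_ids(resp.read().decode("utf-8"))
```

Calling `list_models("<NAS IP>")` with the address of your own machine should return an empty list on a fresh install and the installed model names afterwards.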

The landing page explains how to get started: browse the models, select and install the one you need, then call it to start a conversation.

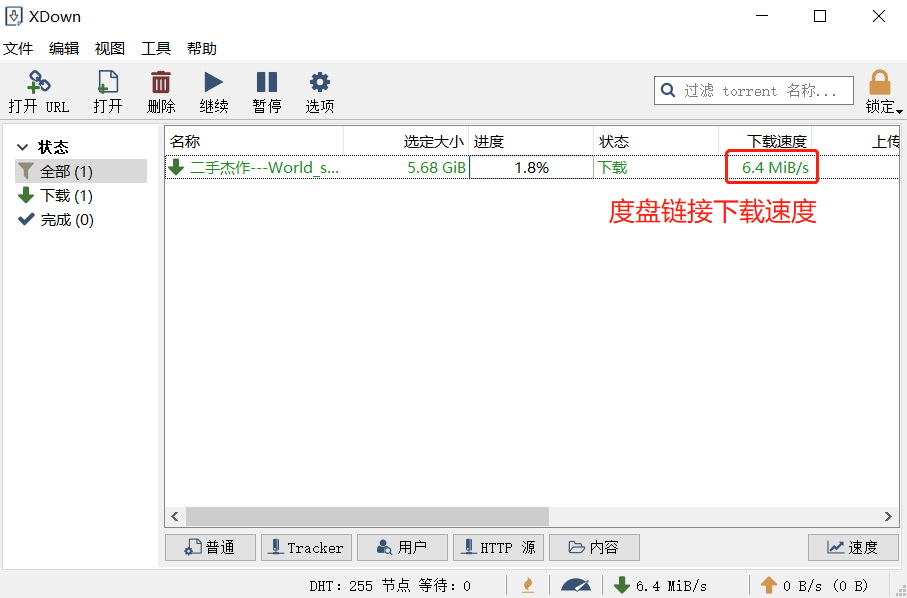

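Quite a few models are offered, and all of them can be downloaded directly (a good network connection is sometimes needed; I also ran into the model list failing to load, and it worked again a day later).

TIP: Offline model upload is supported; just place the model file in the models directory.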
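The offline route amounts to copying a model file into the host directory that the Compose file mounts into the container (`./models`). A minimal sketch of that step follows. The function name is hypothetical, and whether the server detects the new file without a restart is not covered here.

```python
import shutil
from pathlib import Path


def install_local_model(model_file: str, models_dir: str = "./models") -> Path:
    """Copy a downloaded model file into the directory mounted at /models."""
    src = Path(model_file)
    if not src.is_file():
        raise FileNotFoundError(f"model file not found: {src}")
    dest_dir = Path(models_dir)
    dest_dir.mkdir(parents=True, exist_ok=True)  # create ./models on first use
    dest = dest_dir / src.name
    shutil.copy2(src, dest)  # copy2 also preserves file timestamps
    return dest
```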

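Filter the models according to your needs, and pay attention to the type of model you download.

Click to download and install (since this setup runs on CPU, I picked a relatively small model).

TIP: The download may report a network error; a good connection is required.

Once installation finishes, go to the chat page.

Type some text and the conversation starts. Generation is not very fast, only 3.4 tokens/s, but the experience is still decent.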
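To put that rate in perspective, generation time is roughly the number of output tokens divided by the throughput. The sketch below is only a back-of-the-envelope estimate: 3.4 tokens/s is the rate measured here, while the 300-token reply length is an assumed example value.

```python
def generation_seconds(num_tokens: int, tokens_per_second: float) -> float:
    """Estimate wall-clock generation time from a measured throughput."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


# 3.4 tokens/s is the observed rate; 300 tokens is an assumed reply length.
estimate = generation_seconds(300, 3.4)
print(f"{estimate:.0f} s")  # prints "88 s"
```

At this rate a few paragraphs of output take about a minute and a half, which is usable for chat but slow for long documents.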

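After all, the model is small, so answer quality is certainly below that of large models, but it needs no internet connection and runs entirely locally.

On the protocol side, it supports the OpenAI API specification (and is also compatible with Elevenlabs, Anthropic, and others).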
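In practice, OpenAI compatibility means a client only needs to point at the local address. The following is a minimal sketch using only the Python standard library, assuming the OpenAI-style `POST /v1/chat/completions` endpoint and response shape. The helper names are illustrative, and the model name passed in must match a model you have actually installed.

```python
import json
import urllib.request


def build_chat_request(base_url: str, model: str, prompt: str) -> urllib.request.Request:
    """Build an OpenAI-style chat completion request without sending it."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def chat(base_url: str, model: str, prompt: str, timeout: float = 300.0) -> str:
    """Send the request and return the assistant's reply text."""
    request = build_chat_request(base_url, model, prompt)
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        body = json.loads(resp.read().decode("utf-8"))
    return body["choices"][0]["message"]["content"]
```

The generous default timeout is deliberate: CPU-only generation at a few tokens per second can take minutes for a long answer.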

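If you need a cluster, you can deploy several devices and combine them for distributed inference to speed things up.

There are many more features that I won't cover in detail; explore them yourself if you are interested.

Resource usage: a 2B model takes roughly 3.5 GB of memory, and the CPU is essentially fully loaded during generation.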

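Summary

The overall LocalAI experience is very friendly, and the web interface greatly lowers the entry barrier for beginners. It supports fully local deployment and keeps working with no network connection. Inference runs on CPU alone with no GPU required, although with a small model on CPU both generation speed (3.4 tokens/s in this test) and answer quality are modest. It is compatible with the OpenAI API specification, so existing applications can migrate with little effort. Models in GGUF format are supported, and recent models from major vendors can be downloaded quickly. For users who need lightweight local AI inference, or who want to try large models quickly without high-end hardware, LocalAI is well worth trying.

Overall recommendation: ⭐⭐⭐⭐ (friendly visual interface, API compatible with the OpenAI API specification)

User experience: ⭐⭐⭐ (interface is easy to pick up, good extensibility and compatibility)

Deployment difficulty: ⭐⭐ (easy)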

Statement

This article was translated and compiled from:

- https://github.com/wysstartgo/LocalAI

- https://docs.flowiseai.com/embeddings/localai-embeddings

